In the lecture, we briefly touch on lexical aspects of Fortran, quite an ancient language as far as programming languages are concerned.

We discuss two such aspects, namely keywords and, in particular, the treatment of whitespace in Fortran. Not out of specific interest in Fortran, but to highlight the differences from more modern treatments: to learn, so to speak, from a “bad example” how to do it better.

Keywords

Both issues are typical tasks for a lexer or scanner. Most languages (but not all) have a number of so-called keywords or reserved words. For Fortran, one should perhaps say: it has keywords, but they are not at the same time reserved words. Lexemes like GOTO or DO can be scanned into tokens for jumps or as part of a looping statement, respectively. But it is also possible to use them as variables. For instance, GOTO = 5 can be a syntactically correct assignment in a correct Fortran program. Note that in most languages, the character sequence GOTO would fit the pattern for identifiers (provided that capital letters are allowed in identifiers); in a language where goto is reserved, it is the scanner that forces it to be interpreted as a jump token rather than as an identifier. That is an example of the fact that scanners prioritize some matches over others: that is ultimately what it means to reserve some (key)words for special usage, thereby disabling other uses.
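To make the prioritization concrete, here is a minimal sketch of a scanner for a small, hypothetical C- or Java-like language (not Fortran): identifier-shaped lexemes are first matched by a single pattern and only afterwards looked up in a keyword table. The keyword set `RESERVED`, the token names, and the function `tokenize` are assumptions for illustration only.

```python
import re

# Hypothetical keyword table for a small C/Java-like language (assumption).
RESERVED = {"goto", "if", "while", "return"}

# Token classes, tried in this order at each position.
TOKEN_SPEC = [
    ("WS", r"\s+"),
    ("WORD", r"[A-Za-z_][A-Za-z0-9_]*"),
    ("NUM", r"\d+"),
    ("OP", r"[=;+]"),
]
MASTER = re.compile("|".join(f"(?P<{name}>{pat})" for name, pat in TOKEN_SPEC))


def tokenize(src: str) -> list[tuple[str, str]]:
    tokens = []
    pos = 0
    while pos < len(src):
        m = MASTER.match(src, pos)
        if m is None:
            raise SyntaxError(f"unexpected character {src[pos]!r} at {pos}")
        kind, text = m.lastgroup, m.group()
        pos = m.end()
        if kind == "WS":
            continue  # whitespace produces no token
        if kind == "WORD":
            # The reserved-word reading wins over the identifier reading.
            kind = "KEYWORD" if text in RESERVED else "IDENT"
        tokens.append((kind, text))
    return tokens


print(tokenize("goto = 5;"))
print(tokenize("gotoX = 5;"))
```

With this scheme, `goto` always becomes a `KEYWORD` token, so a parser would reject `goto = 5;` as an assignment, whereas `gotoX` remains an identifier because the pattern matches the longest identifier-shaped lexeme before the table lookup. A Fortran scanner could not do this lookup unconditionally, since whether GOTO acts as a keyword depends on the rest of the statement.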

Curiously, in Java, goto is a reserved but unused word, i.e., one cannot use it as a variable, even though Java has no goto statement.

Whitespace

Another common aspect handled by lexers is whitespace. Similar to comments, whitespace is largely ignored: it is not turned into a token; instead, the scanner skips over whitespace and comments until it encounters the next character that is neither whitespace nor part of a comment.

Being ignored in that way does not mean that whitespace is completely “meaningless”. It serves as a separator between (other) lexemes. That is in contrast with the treatment in Fortran. There, whitespace was not used to terminate a lexeme and thus did not serve as a separator between lexemes. In Fortran, adding or removing blanks made no difference at all.
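The difference can be sketched in a few lines of Python. Both helper functions are hypothetical and deliberately simplistic: the first uses whitespace as a separator, the second mimics the Fortran convention by deleting blanks before any further scanning (it ignores fixed-form column rules and character data).

```python
def split_on_whitespace(line: str) -> list[str]:
    # Modern convention: whitespace terminates a lexeme, so it separates lexemes.
    return line.split()


def delete_blanks(line: str) -> str:
    # Fortran-like convention (simplified): blanks are removed before scanning,
    # so they cannot separate anything.
    return line.replace(" ", "")


for src in ("GO TO 10", "GOTO 10"):
    print(repr(src), split_on_whitespace(src), delete_blanks(src))
```

With whitespace as a separator, the two spellings give different lexemes (`GO`, `TO` versus `GOTO`); with blanks deleted, both collapse to `GOTO10`, and the boundary between the keyword and the label `10` has to be recovered from other information, because the input no longer contains it.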

What were they thinking?

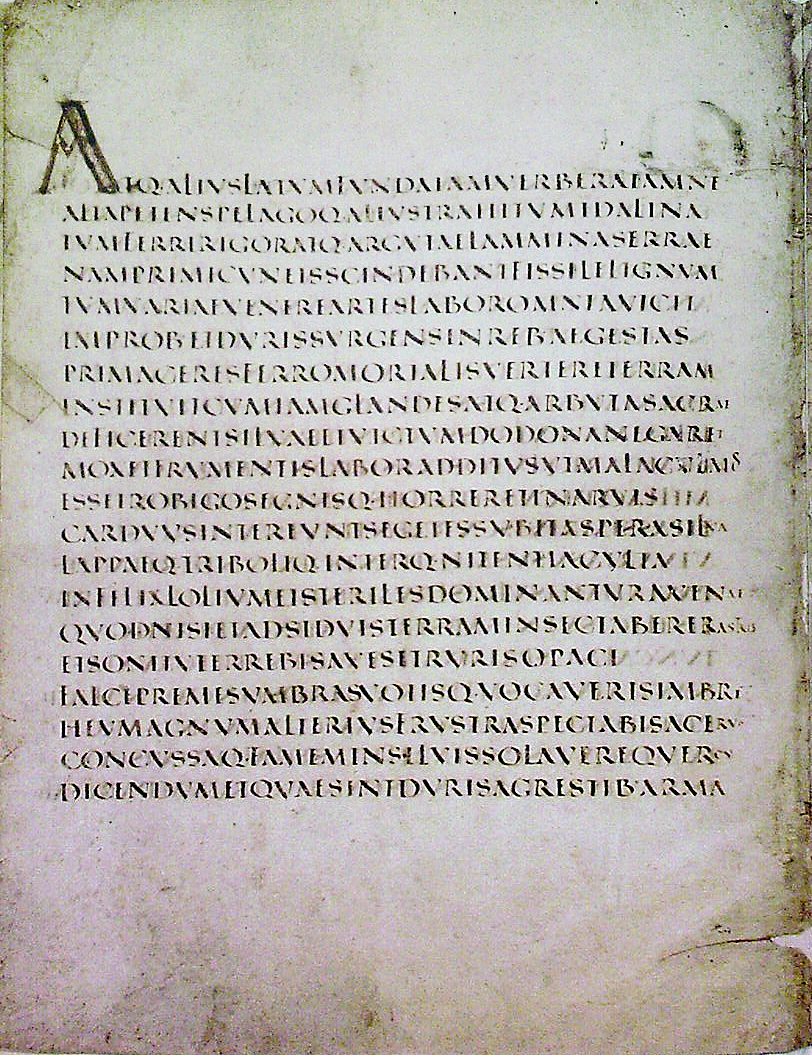

Why it was done like that, I can only speculate. Fortran was one of the first high-level programming languages; if not the first, then at least the first widespread one. Nowadays, treating whitespace like that is considered a bad idea, but in those pioneering days, there may not have been much experience with good conventions for scanning or other parts of a compiler. Or language pragmatics was just not at the top of the list of design priorities. Later programming languages made better choices there. Actually, a similar thing happened with written (alphabetic) languages. In antiquity, texts were often written without spaces (and without punctuation); there are also languages outside Europe written like that. That form of writing is called scriptura continua; here is an example:

Only over time did people figure out that whitespace and punctuation (and perhaps the distinction between upper- and lower-case letters) help structure a text and improve readability.

For Fortran, it may simply not have been a conscious design decision. Perhaps the first robust implementation simply did it that way, filtering out whitespace completely. Maybe, for the corresponding routine, that just seemed the simpler or more straightforward implementation, or it was the first one that came to the implementor’s mind.

Once the first compiler was out and shipped to customers along with some IBM 704s or other early mainframes, that treatment just stuck; it was “just how it was”. Afterwards, it was codified in early programming manuals; see, for instance, the reference manual for Fortran on the IBM 704:

Blank characters, except in column 6, are simply ignored by FORTRAN, and the programmer may use blanks freely to improve the readability of his FORTRAN listing.
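As a rough illustration of what the quoted rule means for a single punched card, here is a sketch assuming the classic fixed-form layout as known from later Fortran dialects: columns 1 to 5 hold the statement number, column 6 the continuation mark (any character other than blank or zero), columns 7 to 72 the statement itself, and a C in column 1 marks a comment card. The function `read_card` is hypothetical, and the IBM 704 compiler may have differed in details.

```python
def read_card(card: str):
    """Split one 80-column card into (label, is_continuation, statement).

    Returns None for a comment card (a 'C' in column 1).
    """
    card = card.ljust(80)[:80]          # pad or cut to exactly 80 columns
    if card[0] == "C":
        return None
    label = card[0:5].replace(" ", "")  # columns 1-5: statement number
    # Column 6 is inspected as is: a blank (or zero) means "new statement".
    is_continuation = card[5] not in (" ", "0")
    statement = card[6:72].replace(" ", "")  # columns 7-72: blanks ignored
    return label, is_continuation, statement


print(read_card("      DO 15 I = 1,100"))
print(read_card("   15 CONTINUE"))
```

Column 6 has to be examined before blanks are thrown away, since a blank in that particular column is exactly what distinguishes the start of a new statement from a continuation card.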

Once things like that are fixed, they do not die out (even if most would agree it had been a bad idea): backward compatibility, being able to still compile older software, is an important goal. Maybe even the old-timers and Fortran veterans looked down on novelties like treating whitespace as a terminator and reserving words (muttering things like “I myself prefer to write very compact code, whitespace is for wimps”).

Another example where lexical conventions refuse to die out is COBOL. When I took a COBOL course at university, one thing that still sticks in my memory is how important it was to indent things properly: certain things had to be indented by exactly 4 blanks, etc. Programs could look like this:

       IDENTIFICATION DIVISION.
       PROGRAM-ID. HELLO-WORLD.
       PROCEDURE DIVISION.
           DISPLAY 'Hello, world'.
           STOP RUN.

One had to follow the indentation requirements slavishly; it almost seemed to me that the art of COBOL programming consisted in indenting properly (the rest was easy, because it could be understood). Then I found out why one had to do it: such an indentation regime was an inheritance from the time of punch cards. I don’t know the details of how punch cards dictated indentation for COBOL; here are some comments on Stack Exchange on the issue. Anyway, when that became clear, I decided to drop out of that course. I had signed up for that extra course on COBOL out of interest, but it turned out to be thoroughly boring for a number of reasons, not just because of the indentation fetishism. It seems that at some universities, proper indentation is still part of grading COBOL solutions (I don’t know how up to date those grading instructions are, though).

In the context of Chapter 2 about lexing, indentation (tabs, etc.) is usually treated as ordinary whitespace as well, unlike in traditional COBOL, where indentation matters. There are other languages where indentation has meaning (Python or Haskell, for instance), though there it is a matter of design, not a holdover from punch cards.
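For contrast, a lexer for a language where indentation is meaningful by design typically turns changes of indentation into tokens of their own; Python’s tokenizer, for instance, emits INDENT and DEDENT tokens. The following is a minimal sketch in that spirit, assuming indentation by spaces only (no tabs); the function `indent_tokens` and its token representation are hypothetical.

```python
def indent_tokens(lines: list[str]) -> list:
    stack = [0]  # indentation widths of the currently open blocks
    tokens = []
    for line in lines:
        if not line.strip():
            continue  # blank lines do not affect indentation
        width = len(line) - len(line.lstrip(" "))
        if width > stack[-1]:
            stack.append(width)
            tokens.append("INDENT")
        while width < stack[-1]:
            stack.pop()
            tokens.append("DEDENT")
        if width != stack[-1]:
            raise IndentationError(f"inconsistent dedent in {line!r}")
        tokens.append(("LINE", line.strip()))
    while len(stack) > 1:  # close all blocks still open at end of input
        stack.pop()
        tokens.append("DEDENT")
    return tokens


print(indent_tokens(["if x:", "    y = 1", "z = 2"]))
```

Here indentation is not thrown away like ordinary whitespace, but it is not checked against fixed columns either: only the relative change from one line to the next matters, and the stack of open widths lets a single dedent close several blocks at once.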

Early orbital and interplanetary NASA missions (with or without Fortran punctuation and whitespace)

In the lecture, we discuss lexical aspects of FORTRAN as potentially confusing, at least seen with today’s eyes. There are a number of apocryphal stories about FORTRAN and failed NASA missions, and probably also about other languages (including assembler). Maybe these stories also simply express a certain amount of schadenfreude: the high and mighty NASA embarks on a zillion-dollar mission to boldly go where no man has gone before. Or perhaps the Russians had recently gone there, which heightened the pressure to shoot the damn thing into the sky. Only to see it go BOOOMM right after lift-off. Then followed a meek explanation for such an extravagant firework, claiming that everything had been engineered top-notch; only the computer wizards, at one single place in a huge, otherwise correct program, had unfortunately mixed up a dot and a comma. And the public chuckled at the thought that the brainy propeller-heads at NASA, highly trained in the esoteric witchcraft of programming, could not even use proper punctuation. It’s a good story; it was probably told and retold and written about, and in the end, it was no longer so clear what exactly had happened.

But it is known anyway that computer glitches brought down more than just one space mission, though not always because of a single dot or comma.

One of these stories (often repeated, and told in different contexts) indeed involves Fortran and punctuation. A piece of control software contained a loop that should have looked like the following:

DO 15 I = 1,100

What actually was written instead was

DO 15 I = 1.100

That changed the meaning drastically. As discussed, scanning FORTRAN means throwing away all blanks, so the statement was treated as

DO15I = 1.100

And, alas, that is an assignment of the value 1.1 to the variable DO15I, not a loop. This story (and similar ones) exists in different variations. It has been told in connection with the interplanetary mission Mariner 1, though that seems unconfirmed; the cause of the Mariner 1 debacle is mostly attributed to a different silly error, unrelated to lexing and not even to FORTRAN (sometimes a hyphen is mentioned, or an overbar). The FORTRAN loop-vs-assignment glitch is mostly traced not to Mariner 1 but to a different space program, namely Project Mercury, which many consider the most plausible origin of the story.
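Compiler textbooks often use this very pair of statements to motivate lookahead in scanners: once the blanks are gone, both statements begin with DO15I=1, and only a comma at the top level after the = reveals a loop header. The following Python sketch of that check is a simplification under stated assumptions (upper-case source, one statement per call, no character data); the pattern `DO_HEADER`, the function `classify`, and its return values are hypothetical.

```python
import re

# DO header after blank removal: DO<label><variable>=<rest>  (simplified)
DO_HEADER = re.compile(r"DO(\d+)([A-Z][A-Z0-9]*)=(.+)")


def classify(statement: str):
    s = statement.replace(" ", "")  # Fortran-style: blanks carry no meaning
    m = DO_HEADER.fullmatch(s)
    if m:
        depth = 0
        # Look ahead for a comma outside parentheses: only a loop header has one.
        for ch in m.group(3):
            if ch == "(":
                depth += 1
            elif ch == ")":
                depth -= 1
            elif ch == "," and depth == 0:
                return ("DO-loop", m.group(1), m.group(2))
    # No top-level comma: treat the statement as  target = expression.
    target, _, expr = s.partition("=")
    return ("assignment", target, expr)


print(classify("DO 15 I = 1,100"))  # ('DO-loop', '15', 'I')
print(classify("DO 15 I = 1.100"))  # ('assignment', 'DO15I', '1.100')
```

The scanner cannot decide whether DO is a keyword until it has looked arbitrarily far ahead in the statement, which is one reason why scanning fixed-form Fortran is considered awkward compared to languages where blanks separate lexemes. Note that a comma inside parentheses, as in `DO 10 I = F(1,2)`, does not count, so that statement is still classified as an assignment.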

See, for instance, the link to Ars Technica, quoting information from the RISKS Digest and forum. For decades, this digest has provided solid background information and discussions concerning computer-related hazards and accidents.