The lecture discussed types and type systems as a central part of a compiler’s static analysis or semantic analysis phase. Actually, the lecture does not really discuss much type systems nor type checkers. Most of the material is about individual more or less well-known examples of particular types, known from this or that or many languages. Type systems and type theory in general, is a huge, complex field, with both deep results and as well as practical applications; after all, all programming languages, perhaps with the exception of a few weird ones, come with a type discipline of one form or the other.1 Honestly, claiming to have scratched the field, even that is an exaggeration.

Type systems

As anyone knows, any given programming language support different types and indeed, all but the most primitive, antediluvian languages support infinitely many types by the fact that there are mechanisms to introduce new types from old ones. The lectures covered some of those type constructors, like array types, tuple types, record types, classes and many more. Note we are talking here about introducing new types, not about creating or obtaining data for a predefined type, it’s about introducing and using types themselves.

Of course, introducing a new type has to go hand in hand with the possibility to obtain data that inhabits that types, otherwise the new type would be useless and uninhabited. There is only one type for which one cannot create an inhabitant, that’s the empty type. It’s supported by some languages as the type void.

On second thought, that might not be 100% exact, there may be ``other’’ types without values in them. Apart from the fact that one could use the type void under a different name, using type synonyms or type aliases, a type like int * void, describing pairs of values where one is an integer and the other en element of void. So that type is best be considered to be equivalent to the empty type. If one allows equivalences like that, one indeed have semantically just one empty type, but it may be known under different forms or in different syntactic representations, like int * void. In the lecture, we discuss other possible equivalences, mostly in connection with structural vs. nominal typing.

Even if we do not show many examples of type systems, we made some general remarks about such systems and their properties. Especially we mention strong typing and safe typing.

The notions don’t mean the same, but seem related. Sometimes they may seem not mean much at all or one finds conflicting interpretations as to what they are supposed to mean or one finds that these terms are used almost synonymously. Sometimes they are used in connection with (or confused with) the the difference between static vs. dynamic typing, and also some distinguish between strict and loosely typed languages.

Here, I try to shed some light on those words, the way I see it. It’s largely a conventional view on the issues and terms , and it’s not some unique systematic view or definite classification. As we’ll see, some of the terminology is not intended as being exactly definable, it’s unavoidably fuzzy anyway.

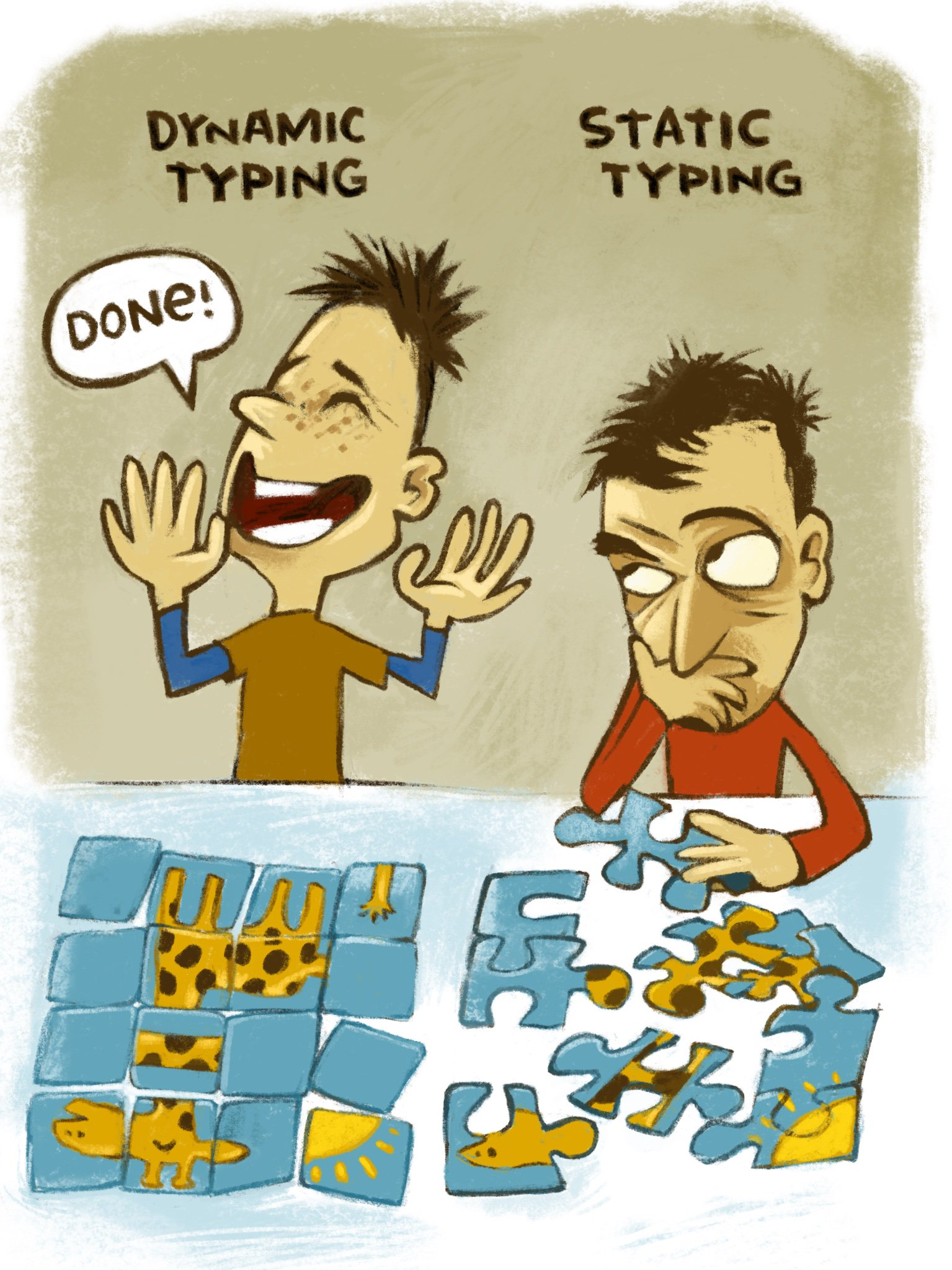

Static and dynamic typing

As said, basically all programming languages do have some sort of type system and thus some type checker. The distinction between static and dynamic typing is one of the clearer ones, there is not much controversy around that. A static type checker checks well-typedness (whatever is captured by the types) at compile time, as part of the static analysis or semantic analysis part. Dynamic type checking means that adherence to a type discipline is done at run-time. In a given language, it might not be a question of ``either-or’’, one can do static type checking for some aspects and dynamic run-time type checks for others.

As an example for that, probably known to the participants of the course, is the treatment of type casts in Java. Java is pretty much statically typed. However, the programmer can resort to type casts, telling the compiler that the type is in fact this or that.

This can be useful in situations, when Java’s type system is not smart (or strong? or loose?) enough to figure out the actual type, resp. it figures out a type too general to be useful in the given situation. For instance, the system might deduce some object is of type Object, which basically gives no information, whereas the user is sure, it must be something more specific. In this case, the programmer can down-cast the value to the more specific type, and the static type checker trusts the user’s wisdom and continues taking the user-provided type as given, not the weaker one it was able to infer by itself. With this help, the static type checker may be able to accept the program as (statically) type correct, and the lower phases of the compiler can continue to generate code.

The type system may trust the user’s insight, but only up-to a point: it may accept the program as (statically) well-typed, but inserts run-time type checks for that cast-situation, just in case it turns out not to be correct after all. If that occurs at run-time, i.e., of the inserted run-time type check fails, the run-time system with throw some exception. The exception may terminate the execution, but that’s vastly preferable over not having at least a run-time type check. One can switch off the run-time type checks for type casts in Java at one’s own risk, for instance for improved execution speed.

One finds languages which use both kinds of checking more extensively. Gradual typing is a word used for type systems which mix static and dynamic aspects, though Java’s use of dynamic typing does not count. Type casts in Java is too tiny a dynamic type checking corner in an otherwise static regime, that one would not call Java gradually typed. Also it’s more of a makeshift solution, offering the user a emergency hatch to circumvent sometimes the restrictions of an otherwise static type system. But it’s not a systematic ``gradual’’ typing regime.

The distinction between static and dynamic type checking might be sufficiently clear from that discussion around casting alone, and the lecture and in particular the obligs focus exclusively on static type checking anyway.

The following discussions below about strong vs. weak type systems or loose and strict ones are mainly done for static type systems. One rarely finds controversy concerning the strength or strictness of dynamic type system. Presumably that’s because in dynamically typed languages the type discipline are not really felt by the programmer, it may be mostly or all under the hood, so a programmer may be only vaguely aware that there is a type discipline at all. That goes so far that one finds statements like ``untyped languages are also called dynamically typed languages’’, for instance here at tutorialspoint. Actually, an truly authoritative source and reference textbook Types and Programming Languages by Benjamin C. Pierce seems to side with that view. The argument there goes that type systems simply are static. With this standpoint, there cannot be such a thing as a dynamic type system, and there is no need to speak about static typing, since typing is static, full stop. In this view, there are of course many untyped languages, or at least languages without a type system. The textbook admits “dynamically typed languages” is quite common terminology, it’s only that there it’s considered as an unfortunate misnomer. Better would have been to talk about run-time checks (involving type tags), but reserve the word type system for its classical use. See also the discussion thread on stackoverflow.

Anyway, it’s a dispute about words, conceptually, the notions of static vs. dynamic checks are clear and uncontroversial. More controversy, probably of the endless kind, on the other hand, is on what is better…

.

Strong and weak type systems

Now a separate classification, the strength of a type system; as mentioned, this categorization, like the next one about safety, it applied mainly on static type systems.

What then is a strong type system, and when is it weak? For this question, there’s almost an agreement that there is disagreement on the issue. And accepting that there are different, contradicting interpretations of what a strong type system is, it’s often recommended to avoid those terms altogether as being unclear.

When characterizing a language’s type system as ``strong’’, the most common interpretation seems to the be the following. Being weak means like having weaknesses in what a static type system is supposed to do: giving static guarantee about the absence of certain run-time errors, like corruption due to incompatible data representations. In that view, a type system is weak if it contains loop-holes where it fails to give reliable guarantees,

We have seen examples of that in the lecture: For instance, the union type in C and the slight improvement in Pascal can easily be used to create programs with illegal type mismatches. For C, that ``weakness’’ if one calls it like that is understandable and acceptable, given the fact, that C and its type system was never designed or intended to abstract completely away from the underlying bytes, words, and the memory layout in general. For Pascal, there are less excuses. C stands a bit in the tradition of the more or less truly untyped languages BCPL and B; Pascal, on the other hand, was perceived (and criticized) for being rigid and strict in various aspects. This criticism came especially from C-programmers; both Pascal and C had attracted widespread adoption. As far as Pascal went, having a quite rich but rigid and inflexible regime without the payback in the form of robust run-time guarantees was perhaps less excusable than the situation in C. One well-known criticism came from Brian W. Kerngihan Why Pascal is not my Favorite programming language, certainly a member of the C- and not the Pascal-camp… Note in passing, that paper actually refers to Pascal as ``(almost) strongly typed’’.

One should not forget, however, that now is now and then was then. In the literature of that time one finds comparisons between C and Pascal, that classify both as strongly typed and one finds that view still today. Perhaps it’s just a case in point, namely that strong typing does not mean too much. What is considered a strong typing discipline at some point is later, as time goes by, not really that strong, especially in comparison with newer designs.

Coming back to the tagged record types of Pascal: as discussed in the lecture, that data structure, enabled the user of records a more easy protection than as with C-structs, but the protection was so checked or enforces, so the programmer can easily circumvent that. That is sometimes referred to as Pacscal’s type hole for variant records (there are more); for C one would not bother to refer to the ``weakness’’ of the type system in connection with structs a type hole, probably because C’s type system never had the ambition to give much protection against such kind of errors.

Much more notorious is Eiffel’s type hole, a language designed as supporting advanced (typing) concepts, well-founded on state-of-the-art theory, and even supporting design and verification. Eiffel is pioneering in supporting design-by-contract. So it was known for all kinds of advanced and ambitious stuff. It was also known, however, for some weakness in the type system, the mentioned type hole. It had to do with the typing of methods, in particular for arguments when overriding methods. Probably, the hole in Eiffel’s type system is the most notorious of all ``weaknesses’’ of known type system. It in particular led to a controversy (and attempts in Eiffel to repair or patch the hole, though not by dynamic typing). Here is not the place to dig deeper into the “covariance vs. contravariance“-debate, as it is known. The wiki pages here recount some of the controversy, with links to further sources.

In the discussion here, the strength of a type system is related to the extent, the type system can give guarantees to prevent certain run-time errors, or at least give assistance to the programmer to prevent those. So the weakness, in this view, is measured by the amount of loopholes it leaves that may lead to errors, in particular corruption of memory. To say the same thing differently: strength is the extent to which the type system can maintain the abstraction captured by the type. For the union types, to which extent the language maintains or even enforces that union or sum types represent that abstract concept of an alternative. In C or Pascal, that concept is only partially realized: a union type of A and B represents, when used with care, a value of A or alternatively of B, but it may also be some random surprise or crashed program. That’s the type hole.

But that’s actually only one interpretation of being strong or weak, maybe the most common one. However, such guarantees (or their lack) is better captured by the notion of type safety. See the section later.

Another interpretation of a type system being strong may be to be advanced compared to other type systems in use. We mentioned that at the heydays of Pascal and C, some classified their type systems as being strong.

There are still other interpretations. One is to think a type system is strong because it imposes strong restrictions on what the programmer is allowed to do and what not. That can be kind of correlated with the safety guarantees it can offer. To be very restrictive could make it possible to not allow many loopholes, or maybe none at all. At least with a very rigid discipline, it’s easier to achieve for the compiler. On the other hand, giving the programmer mostly a free hand makes it much harder to give guarantees that nothing goes wrong: the more freedom the programmer has, the more can go wrong as well.

So this view of being strong correlates with assuring or increasing safety, or hopefully it correlates: what’s the point in restricting the programmer’s freedom, if there’s no payback in terms of increased safety, and it makes no sense to restrict to do useful things without restricting also the possibilities to shoot oneself in the foot. But a type system strong in that sense is better called strict or restrictive or rigid or inflexible…

And there is still another way how to interpret what it means to be strong resp weak. One could call a type system strong if it allows do do fancy things, being flexible and expressive, without introducing loopholes. A system giving the programmer a great degree of freedom and, and at the same type, not compromising of type safety, is certainly not ``weaker’’ that one that that simply forbids flexibility for the sake of playing it safe. In that interpretation, the ``strength’’ is a measure of the expressiveness of the type system and often related to different forms of type polymorphism.

To sum up this part. To be strong for a type system has different, partly overlapping and even partly contradictory interpretations. Overall it may not mean not much more than being ``good’’ or ``advanced’’ is some way. In that sense it’s better treated as not much more of a marketing term. If one rolls out a new programming languages, which advertises its type system as an important part, one better calls the language ``modern’’ and the type system ``strong’’. Whatever it means, it sounds at least better than weak and outdated… See also the discussions on the issue on stack exchange.

Type safety

Safety is another criterion for type system. When discussing different interpretations of being strong, we touched upon type safety already. Being type-safe actually is uncontroversial, it’s pretty clear what it means, and it has a technical content. Let’s focus on static type system, so being type safe is meant as being statically type safe, which is the conventional interpretation. And this in the following types and type systems means static types and static type system, unless stated otherwise.

Types are static abstractions of run-time reality. I.e., they predict what will happen at run-time. As exact predictions of run-time behavior is impossible, one has to content oneself with approximate predictions. This approximation is implied by the term ``abstraction’’: it’s abstract in that it ignores and abstracts away from details. For example, $42 is a concrete run-time value, that may occur at some point in some int-typed variable. It’s much easier to predict the abstraction int than any concrete specific value.

There is of course many details and properties one can ignore or abstract away from. Being

There may be unsafe corners in the language, but the unsafe areas are well-defined, and no type holes lurk in dark and unclear corners of the language

You may also read the longer and more detailed blog post about type safety.

Type

The philosophy of BCPL is not one of the tyrant who thinks he knows best and lays down the law on what is and what is not allowed; rather, BCPL acts more as a servant offering his services to the best of his ability without complaint, even when confronted with apparent nonsense. The programmer is always assumed to know what he is doing and is not hemmed in by petty restrictions.

Well-typed program’s can’t go wrong. (Robin Milner)

A Theory of Type Polymorphism in Programming What does it mean when reading that a language has a ``strong’’ type system, is strongly typed, or is type safe? The latter, type safety, is more clearly defined. It’s also connected to Milner’s dictum. It is meant to be a statement about static typing and static type system. Types, as mentioned, can be understood as abstractions. As far as static type systems are concerned, these abstractions are also predictions of what will happen at run-time. The predictions are not exact, it’s approximate. For example, typically the type system cannot determine, which concrete integer will be given back as a result of a function, but it can determine that the result will be an integer, it’s just unclear at compiler time which it will be. That hangs together with fundamental limitations of what can be algorithmically determined (Halting problem, Rice theorem). But also more obvious reasons. Let’s stick with example of determining what value a function will return. A function will have an input, otherwise, what’s the point of having a function or procedure, and typically the behavior of the function, in particular the resulting value of the function will depend on the input (otherwise again, what would be the point of having a function). If one

In contrast to (standard) types: many other abstractions in static analysis (like the control-flow graph or data-flow analysis and others) are not directly visible in the source code. However, in the light of the introductory remarks that ``types’’ can capture a very broad spectrum of semantic properties of a language if one just makes the notion of type general enough (``ownership’’, ``memory consumption’’), it should come as no surprise that one can capture data flow in appropriately complex type systems, as well…

Besides that: there is no really any truly untyped language around, there is always some discipline (beyond syntax) on what a programmer is allowed to do and what not. Probably the anarchistic recipe of ``anything (syntactically correct) goes’’ tends to lead to disaster anyway. Note that ``dynamically typed’’ or ``weakly typed’’ is not the same as ``untyped’’.

Footnotes

1 One more or less well-known example of untyped language is BCPL (Basic CPL or Basic Combined Programming Language). Or see also here. Likewise the language B, which was influenced by BCPL, and which in turn is a predecessor of C…). The description of B explicitly states, that B is a typeless language. Some put forward the argument that the languages are not in fact typeless or untyped, but uni-typed, which means there is exactly one type for everything. While actually some arguments about that seem to get heated, it seems like splitting hairs to me.